Steve Oghumu, PhD, selected for Provost's Midcareer Scholars: Scarlet and Gray Associate Professor Program

As part of one of the largest academic medical centers in the country, the college combines interdisciplinary medical education with cutting-edge research and science-based patient care to transform the health of our communities. This page serves as a snapshot of the highlights the Ohio State College of Medicine experienced in fiscal year 2025. From our recognition in U.S. News & World Report to receiving millions of dollars in extramural research funding to lead breakthroughs and innovative discoveries, it was a tremendous year!

Research is an integral part of what makes Ohio State such a force in the global medical community. Our investment in research and commitment to breakthrough science are how we’re developing innovative solutions for tomorrow’s health challenges.

A landmark drug discovery has led to a new clinical trial that may benefit patients with any solid tumor or non-Hodgkin lymphoma who have few remaining treatment options.

Advances in artificial intelligence continue to revolutionize scientific discovery and support researchers’ efforts to detect disease earlier and discover new drugs.

With an innovative curriculum, world-class faculty and state-of-the-art facilities, we are preparing our learners to meet the challenges of the continually evolving field of medicine.

Immersive technology allows learners to virtually explore anatomical details with astonishing clarity, reshaping the way health science students learn.

Engaging and inspiring young minds early in their education can shape their futures while strengthening the future of health care.

As one of America’s top-ranked academic medical centers, our mission is to improve health in Ohio and across the world through innovations and transformation in research, education, patient care and community engagement.

His visit to the mobile lung cancer screening unit saved his life: it caught his cancer early, leading to innovative treatment and care.

Ohio State’s Diabetes in Pregnancy Program has pioneered advancements to improve outcomes for thousands with type 1, type 2 and gestational diabetes.

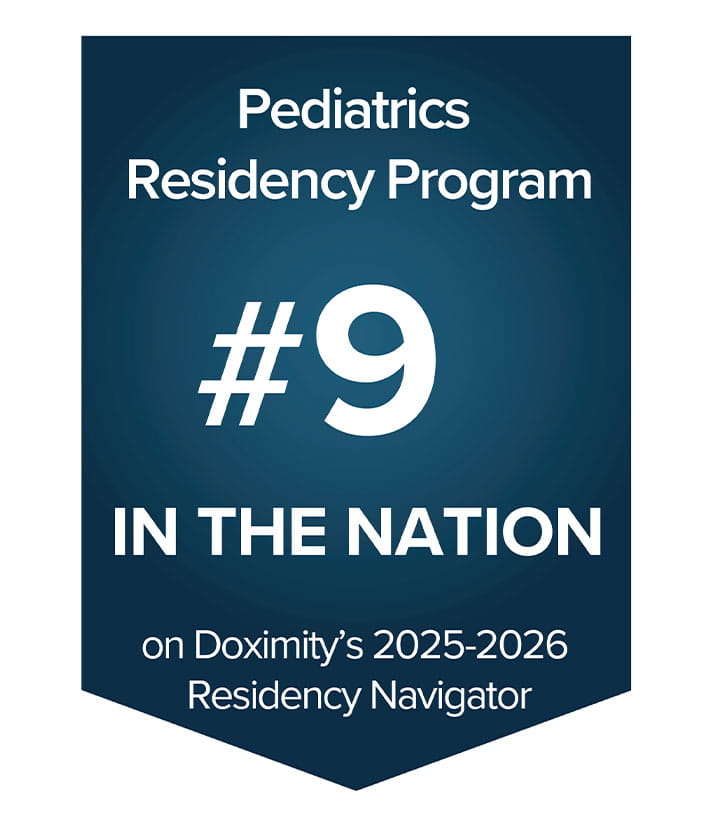

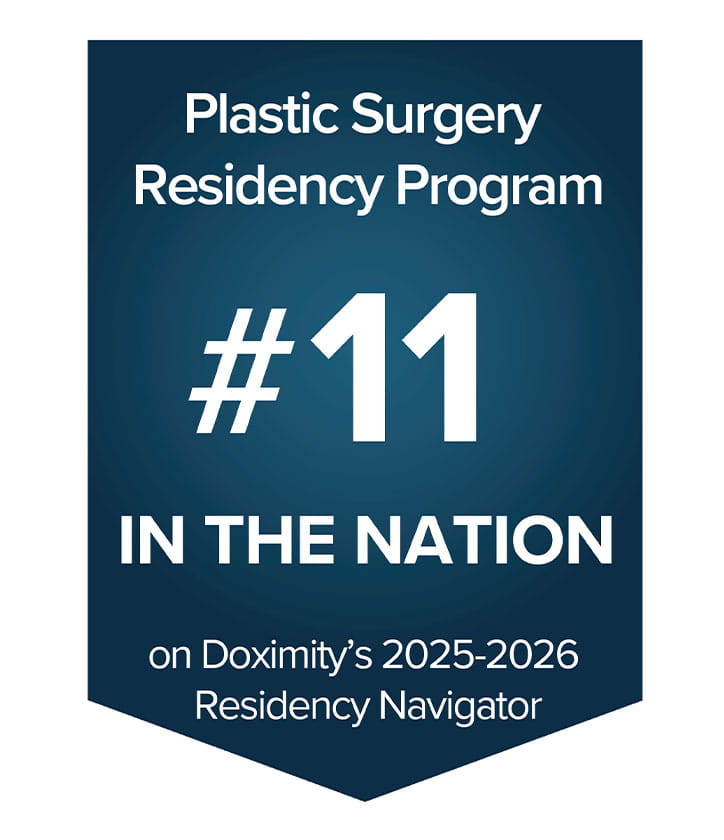

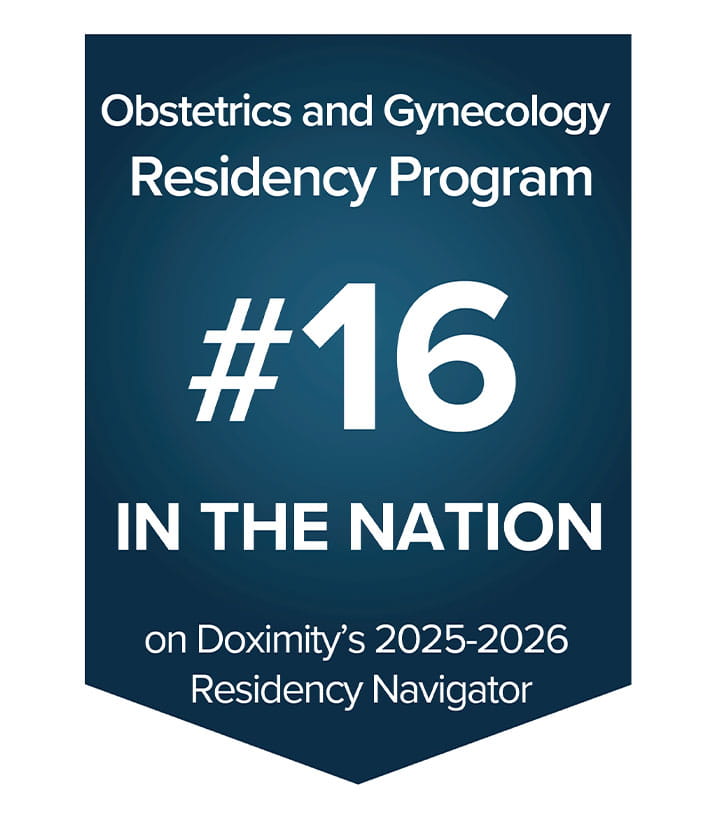

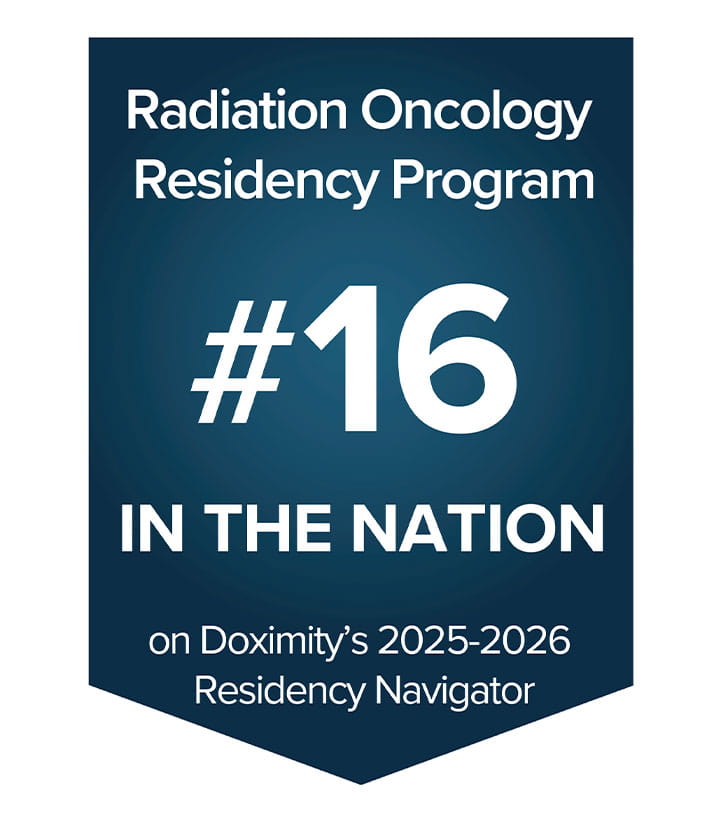

Train at one of the nation’s largest academic health centers.

Tier 1 for Research

Tier 2 for Primary Care

Number 4 for Physical Therapy Doctorate

Number 9 for Occupational Therapy Doctorate

Learn more about education and admission